Here are some of the posts that caught my eye recently. Hope you find something interesting.

- France Set to Allow Police to Spy Through Phones. (Le Monde)

- Your Most Ambivalent Relationships are the Most Toxic. (NYT)

- Is Positive Psychology Science or Snake Oil? (Psychology Today)

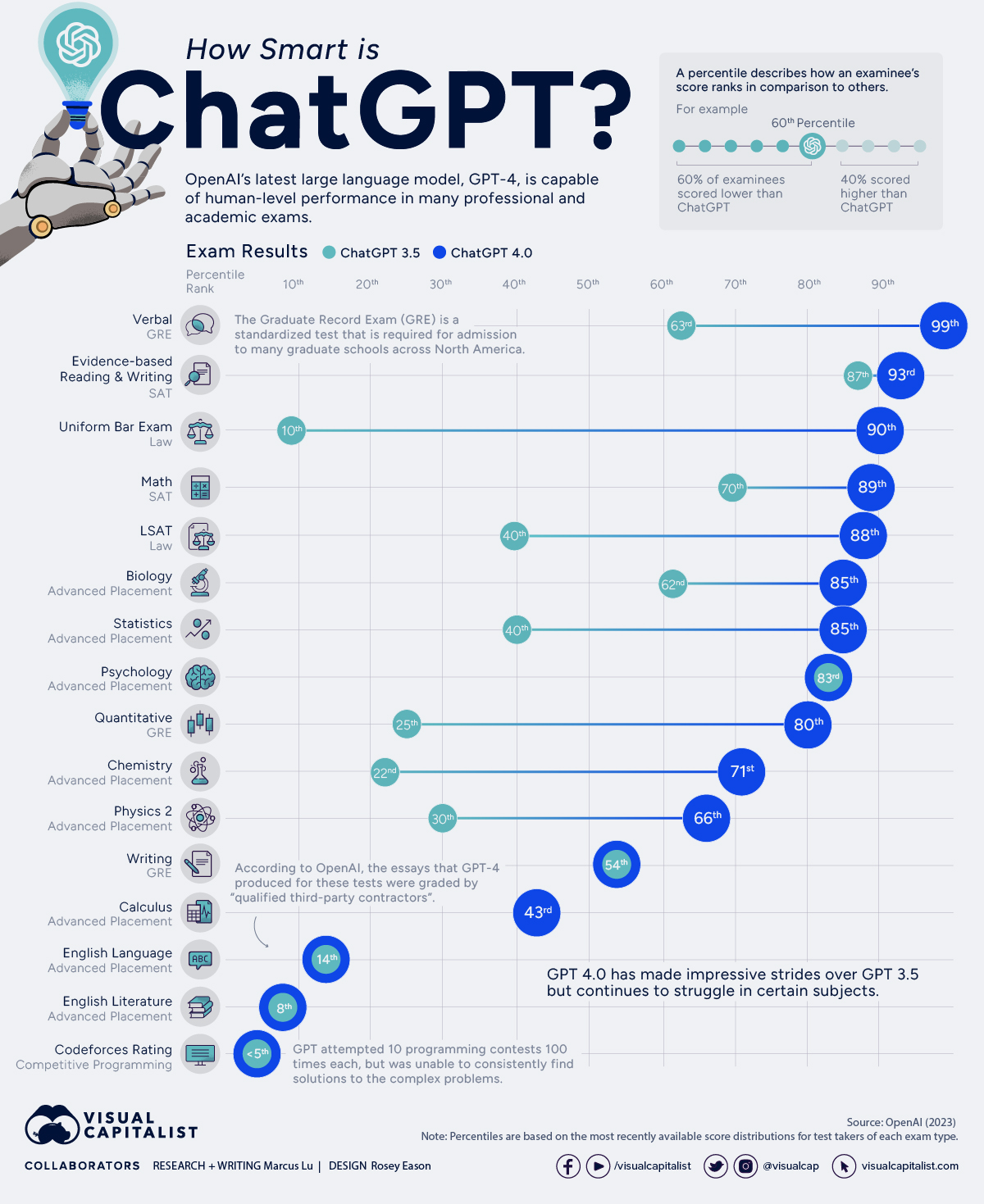

- Einstein and Musk Chatbots Are Driving Millions to Download Character.AI. (Bloomberg)

- New Report Warns of Brain Control Weapons Being Developed by Communist China. (Daily Wire)

- The Copyright Battles Against OpenAI Have Begun. (Quartz)

- Baby Boomers Own Pretty Much Everything - But Millennials Could Be About to Catch Up. (Markets)

- Forbes World's Billionaires List 2023: The Top 200. (Forbes)

- Wall Street Soothsayers Are Bewildered About What's Next. (Bloomberg)

- SVB, BBBY, Lordstown Lead List of US Bankruptcies as Companies Fold up at the Fastest Pace Since 2010. (Markets)

Pornhub via


Rewriting The Past, Present, and Future

In an ideal world, history would be objective: facts about what happened, unencumbered by the biases of society, the victors, or the narrator.

I think it's apparent that history as we know it is subjective. The narrative shifts to support the needs of the society that's reporting it. History books are written by the victors.

The Cold War is a great example. Interpretations of its causes and events differed during the war, shifted immediately after it, and have changed again today.

That's just one example; to some degree, we can see it everywhere, even in the way events are reported today. News networks color a story depending on whether they lean red or blue, and the internet is quick to jump on a bandwagon even when the information is hearsay.

Now, what happens when you can literally rewrite history?

That's one of the potential risks of generative AI and deepfake technology. As the technology improves, it becomes easier to create "supporting evidence" for whatever narrative a government or other entity wants to make real.

On July 20th, 1969, Neil Armstrong and Buzz Aldrin landed safely on the Moon, and they later returned safely to Earth.

MIT recently created a deepfake of President Nixon delivering the contingency speech his speechwriter, William Safire, wrote during the Apollo 11 mission in case of disaster. The whole video is worth watching, but the speech starts around 4:20.

MIT via In Event Of Moon Disaster

Media disinformation is more dangerous than ever. Alternative narratives and histories can only be recognized as such when they are discernible from the truth. Moreover, people often aren't looking for the "truth"; they tend to seek out information that already fits their biases.

As deepfakes get better, we'll also get better at detecting them. But it's a cat-and-mouse game with no end in sight. Signaling theory describes the same dynamic: signalers evolve to become better at manipulating receivers, while receivers evolve to become more resistant to manipulation. We see the same thing in algorithmic trading.

In 1983, Stanislav Petrov saved the world. Petrov was the duty officer at the command center for the Soviet nuclear early-warning system when the system reported that a missile had been launched from the U.S., followed by up to five more. Petrov judged the reports to be a false alarm and did not pass them up the chain of command as a genuine attack, averting a possible retaliatory strike (and a potential nuclear World War III in which countless people would have died).

But fabricated messages are getting more convincing, and it's harder than ever to tell real from fake. What happens when someone produces a convincing enough deepfake of a world leader issuing a credible threat against another country? Will people have the wherewithal to double-check?

Lots to think about.

I'm excited about the possibilities of technology, and I believe they're predominantly good. But, as always, in search of the good, we must acknowledge and be prepared for the bad.

Posted at 08:51 PM in Business, Current Affairs, Gadgets, Ideas, Market Commentary, Personal Development, Science, Web/Tech, Writing