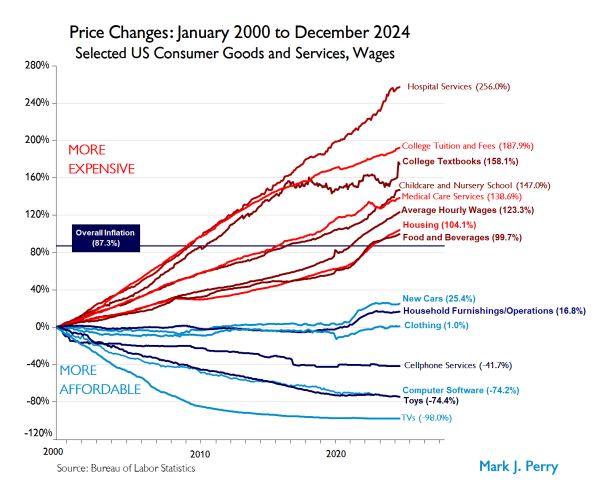

In an era of economic uncertainty, few visualizations have captured the attention of economists, policymakers, and everyday consumers like the “Chart of the Century” created and named by Mark Perry, an economics professor and AEI scholar. This chart tracks the dramatic shifts in consumer prices across various sectors of the American economy over a quarter-century, revealing patterns that challenge conventional wisdom about inflation, purchasing power, and economic well-being.

The most current version reports price increases from 2000 through the end of 2024 for 14 categories of goods and services, along with the average wage and overall Consumer Price Index. Here are the key findings.

- Wage growth has outpaced inflation by a significant margin (123.3% vs. 90%) from 2000 to 2024, resulting in a 16.1% increase in real purchasing power.

- Sharp divergence exists between sectors: Technology and tradable goods have become much cheaper, while healthcare, education, and childcare costs soared.

- Market competition and trade liberalization drive price decreases, while regulated markets and limited competition contribute to price increases.

- Despite objective improvements in purchasing power, many consumers still feel financial pressure due to changing consumption patterns and “quality of life creep”.

- Policy challenges remain in balancing regulation with market forces, particularly in essential services like healthcare and education.

Core Economic Metrics: The Big Picture

The foundation of this analysis rests on three critical metrics that provide context for all other price trends:

| Metric | Change (2000-2024) |
| --- | --- |
| Consumer Price Index (CPI) | +90% |
| Average Hourly Income | +123.3% |
| Real Purchasing Power | +16.1% |

From January 2000 to now, the CPI for All Items has increased by almost 90%. That is a big jump from its 59.6% level in 2019, when I first shared this chart.

These numbers tell a surprising story: despite widespread perceptions of economic hardship, Americans’ wages have grown significantly faster than inflation over these 24 years. This translates to a meaningful increase in real purchasing power – the ability to buy more goods and services with the same amount of work.

However, this aggregate picture masks dramatic variations across different categories of goods and services. Let’s explore these divergent trends.

The price of technology, electronics, and consumer goods — think toys and television sets — has tumbled over the past two decades. Why? These categories benefit from global competition, technological innovation, and manufacturing efficiencies.

Meanwhile, the costs of hospital stays, childcare, and college tuition, to name a few, have surged. Why? These sectors share important characteristics: they are typically non-tradable services (cannot be imported), operate in markets with limited competition, and are often subject to extensive regulation.

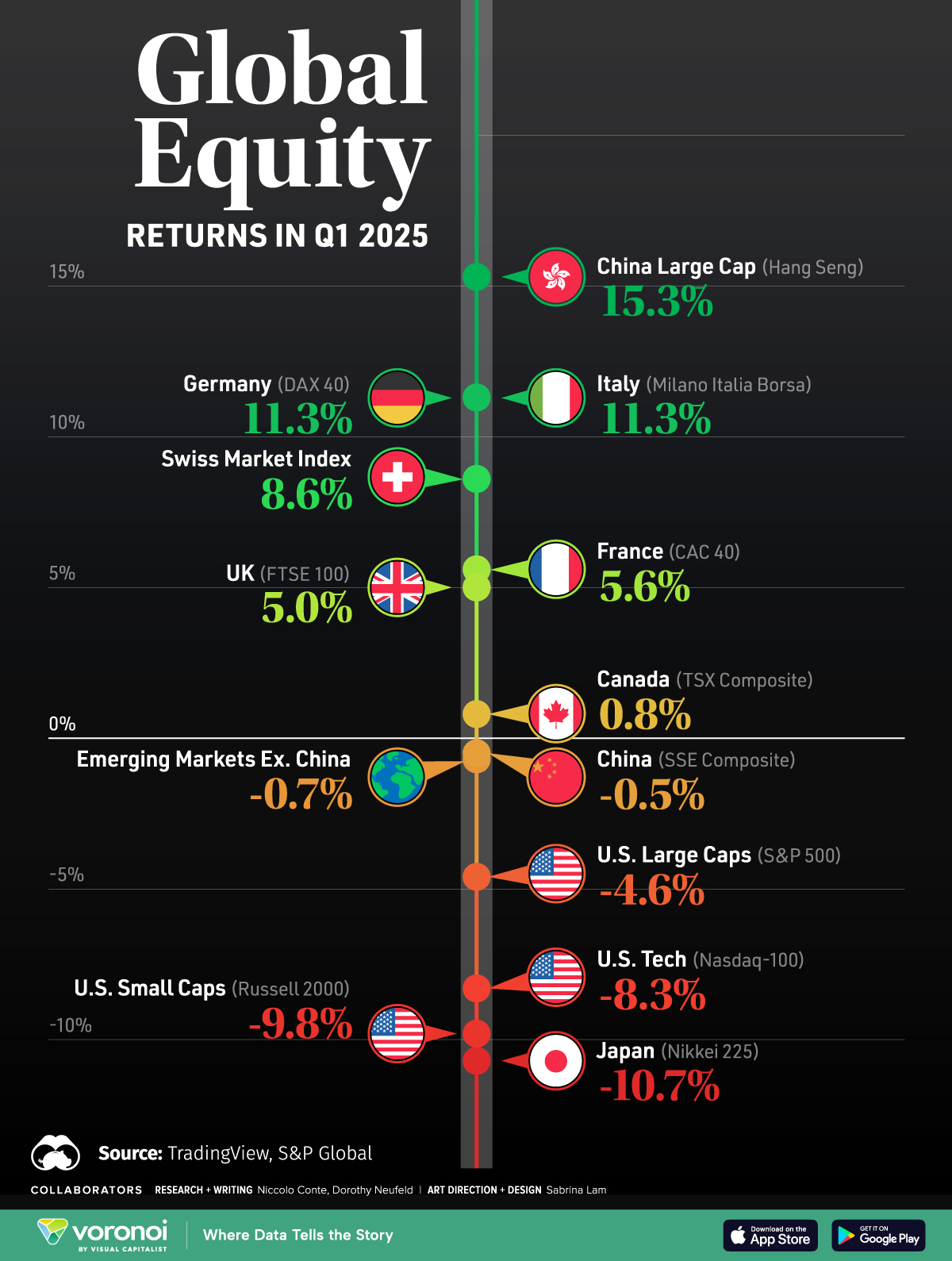

Below is Perry’s Chart of the Century. To help you interpret it better, lines above the overall inflation line have become functionally more expensive over time, and lines below the overall inflation line have become functionally less expensive.

via Human Progress

For context, at the beginning of 2020, food, beverages, and housing were in line with inflation. They’ve now skyrocketed above inflation, which helps to explain the unease many households are feeling right now. College tuition and hospital services also have continued to rise relative to inflation over the past few years.

Market Dynamics: Understanding the Divergence

What explains these dramatically different price trajectories? Here are several (but not all of the) key factors:

Factors Driving Price Increases

- Government regulation creating compliance costs and barriers to entry.

- Quasi-monopolistic markets with limited price competition.

- Non-tradable services protected from foreign competition.

- Limited technological disruption in certain service sectors.

Factors Driving Price Decreases

- Foreign competition putting downward pressure on prices.

- Technological advancement reducing production costs.

- Manufacturing optimization increasing efficiency.

- Market competition forcing price discipline.

- Trade liberalization expanding access to global markets.

The prices that fell the most all belong to technologies. New technologies almost always become less expensive as manufacturing is optimized, components get cheaper, and competition increases. According to Visual Capitalist, at the turn of the century, a flat-screen TV cost around 17% of the median income ($42,148). Prices have fallen quickly since then: today, a new TV typically costs less than 1% of the U.S. median income ($54,132).

We should also consider the larger trends. For example, in 2020, I asked what the coronavirus would do to prices ... and the answer turned out to be way less than expected. If you look at the change in trajectories rather than the rise in inflation, very few categories were heavily affected. While hospital services have increased significantly since 2019, they were already rising. There were some immediate impacts, but they went away relatively quickly.

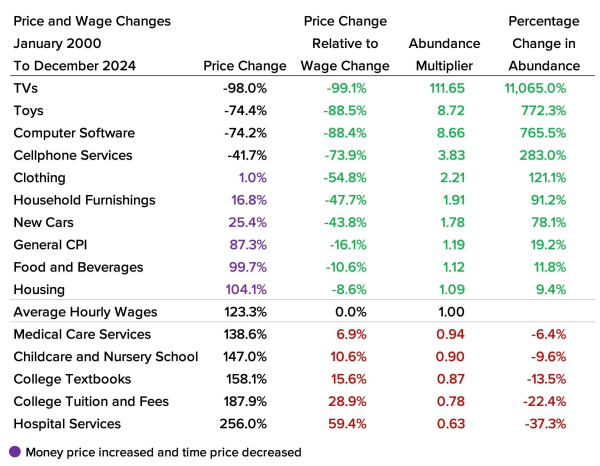

Another thing to consider is average hourly income. Since 2000, overall prices have risen by 87.3%, while average hourly income has increased by 123.3%. This means that hourly income grew about 41% faster than prices (123.3 / 87.3 ≈ 1.41), which indicates a 16.1% decrease in overall time prices. You get 19.2% more today for the same amount of time worked ~24 years ago. This represents a mild increase in abundance since last year.

via Human Progress

Although 10 of the 14 items rose in nominal prices over the past 24 years, only five had a higher time price when accounting for the 123.3 percent increase in hourly wages. Those items were medical care services, childcare and nursery school, college textbooks, college tuition and fees, and hospital services.
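The time-price arithmetic above can be checked with a few lines of Python. This is a minimal sketch, not Human Progress's own method: the function names are made up for illustration, and the only inputs taken from the text are the 87.3% rise in prices and the 123.3% rise in hourly income. The +150% item at the end is a hypothetical example, not a figure from the chart.

```python
def time_price_change(nominal_change_pct, wage_change_pct):
    """Percent change in the hours of work needed to buy the same item."""
    price_ratio = 1 + nominal_change_pct / 100
    wage_ratio = 1 + wage_change_pct / 100
    return (price_ratio / wage_ratio - 1) * 100


def abundance_change(nominal_change_pct, wage_change_pct):
    """Percent change in how much one hour of work buys (inverse of time price)."""
    price_ratio = 1 + nominal_change_pct / 100
    wage_ratio = 1 + wage_change_pct / 100
    return (wage_ratio / price_ratio - 1) * 100


# Figures quoted in the text (2000 to 2024).
CPI_CHANGE = 87.3    # overall prices
WAGE_CHANGE = 123.3  # average hourly income

overall_time_price = time_price_change(CPI_CHANGE, WAGE_CHANGE)
overall_abundance = abundance_change(CPI_CHANGE, WAGE_CHANGE)

print(f"Overall time price change: {overall_time_price:.1f}%")  # -16.1%
print(f"Goods per hour worked:     {overall_abundance:+.1f}%")  # +19.2%

# Hypothetical item (not from the chart): a nominal price up 150%.
# Its time price rises because 150% exceeds the 123.3% wage increase.
hypothetical_item = time_price_change(150.0, WAGE_CHANGE)
print(f"Hypothetical +150% item:   {hypothetical_item:+.1f}%")
```

The 16.1% decrease and the 19.2% increase describe the same change from two sides: each basket takes 16.1% fewer hours to buy, so each hour buys 19.2% more. It also shows why an item can rise in nominal price and still fall in time price: the break-even point is a nominal increase equal to the 123.3% rise in wages.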

The Consumer Experience: Perception vs. Reality

It’s interesting to look at data like that, knowing that the average household is feeling a “crunch” right now.

My guess is that few consumers distinguish between perception and reality. However, feeling a crunch isn’t necessarily the same as being in a crunch.

For instance, we must account for ‘quality of life creep,’ where people tend to splurge on luxuries as their standard of living improves. With the ease of online shopping and access to consumer credit, it has become increasingly easy to make impulse purchases, leading to reduced savings and feelings of financial scarcity. This phenomenon is a function of increased consumption (rather than inflation), yet it still leaves consumers feeling like they’re struggling to make ends meet. Our sense of what’s normal has risen, and that’s hard to unlearn.

Perry’s ‘Chart of the Century’ reveals the complex relationships between inflation, consumption, and economic growth. While households may feel financial strain, the data shows that income has outpaced inflation, and technology has made many goods more affordable. Nonetheless, there is still a real sense of economic struggle, especially in these last few months.

Economic Patterns: Regulated vs. Free Markets

A clear pattern emerges when examining the relationship between market structures and price trends.

Regulated Markets (like healthcare and education) tend toward higher prices over time, feature less price competition, and offer limited consumer choice.

Free Markets show price decreases over time, feature greater competition, and provide consumers more options.

This pattern raises important questions about the role of regulation in various economic sectors and the balance between consumer protection and market efficiency.

With that in mind, how can policymakers address sectors experiencing significant price hikes, such as healthcare and education, without stifling innovation in tradable goods and services?

Future Outlook

Beyond all that, here are three other key trends to watch.

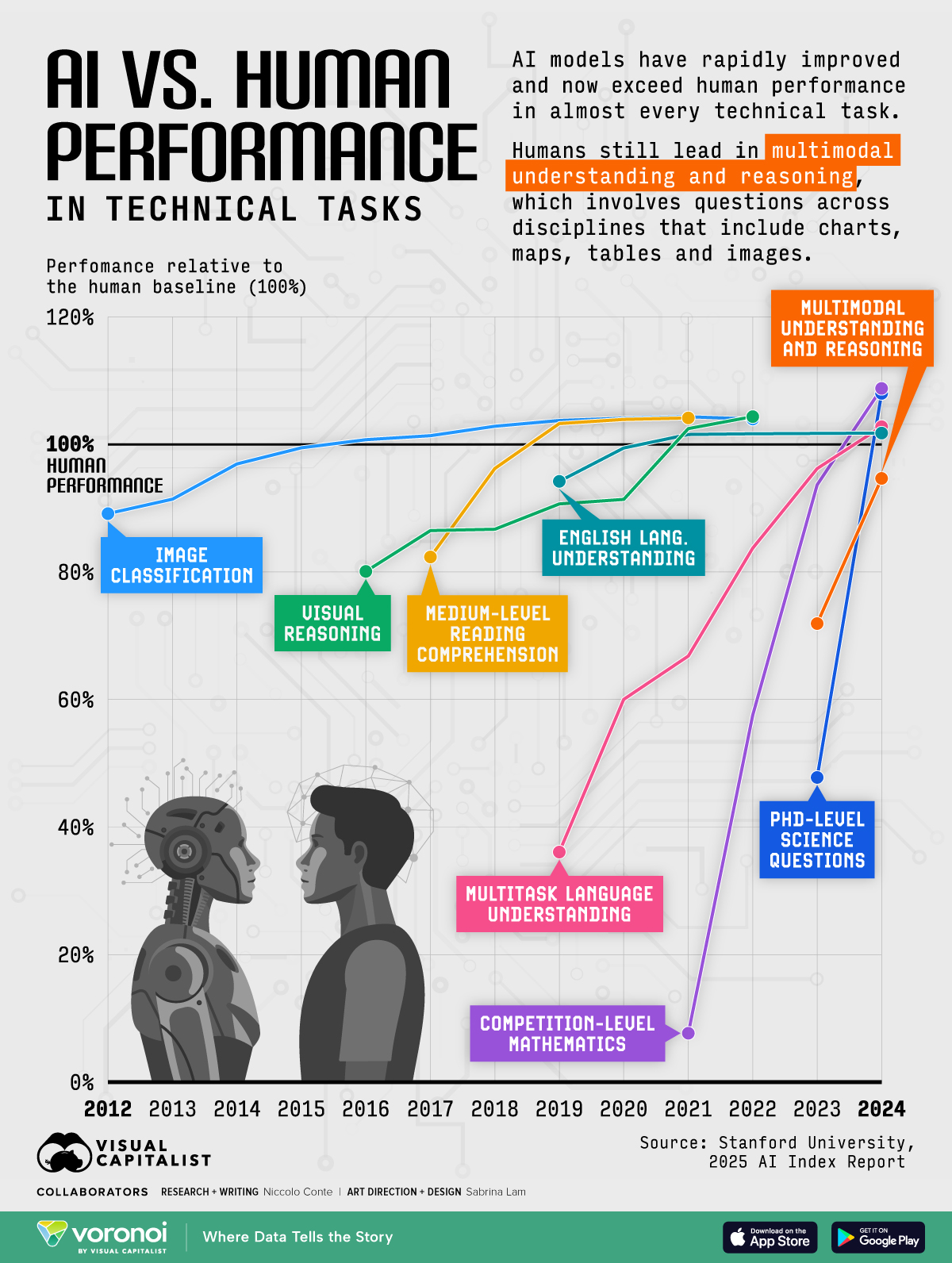

- AI Disruption: Telemedicine and online education could bend healthcare/education cost curves.

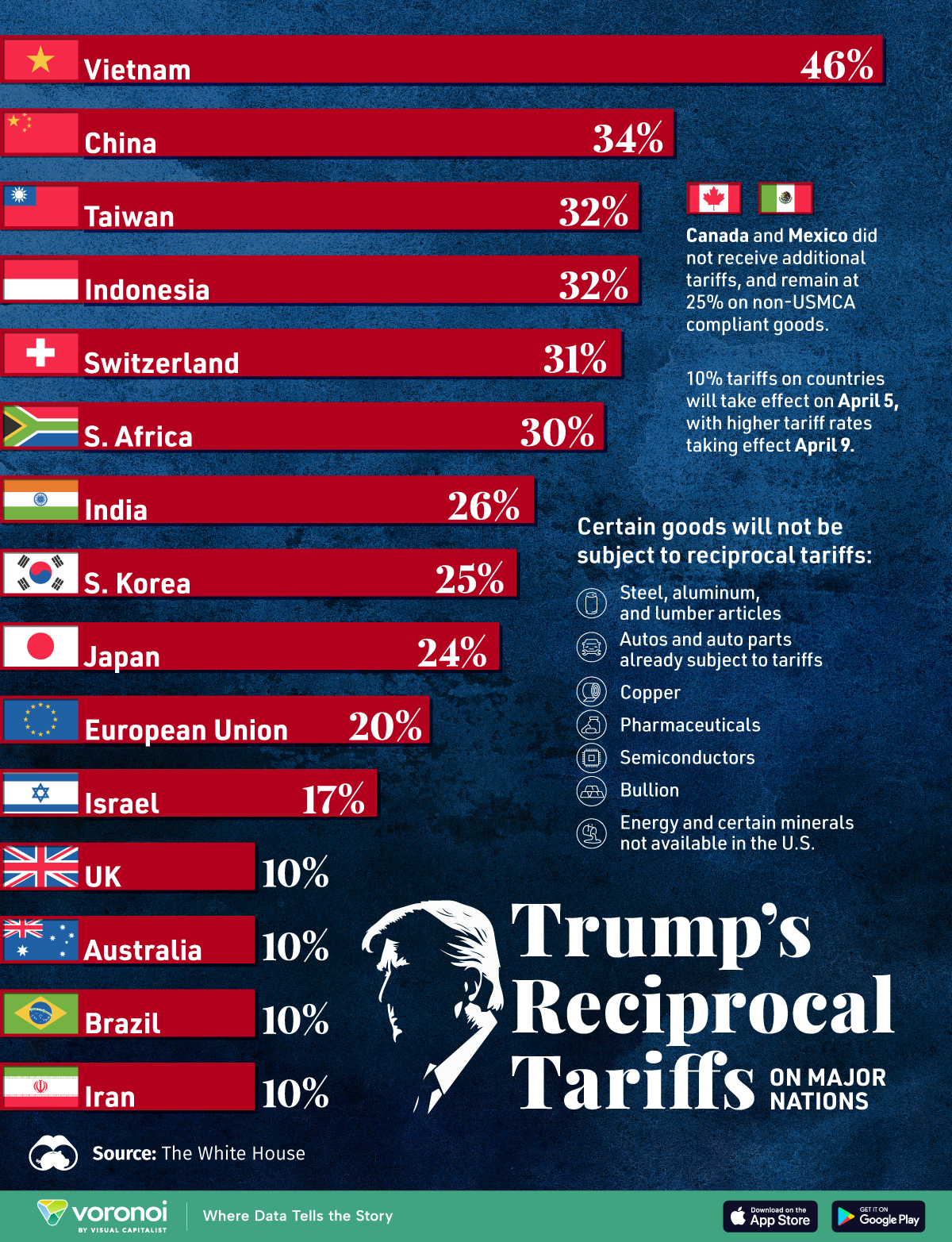

- Trade Wars: New tariffs risk reversing tech price declines (e.g., proposed tariffs on Chinese electronics).

- Generational Shifts: Millennials prioritize experiences over goods, potentially easing service demand.

As innovation continues and policy changes, it will be interesting to see if we can make essential services as dynamically competitive as consumer electronics. While America is one of the best countries in the world in countless ways, we do lag behind several countries in healthcare and education.

Onwards.

Global Happiness Levels in 2025

Are you Happy?

What does that mean? How do you define it? And how do you measure it?

Happiness is a surprisingly complex concept comprised of conditions that highlight positive emotions over negative ones. And upon a bit of reflection, happiness is bolstered by the support of comfort, freedom, wealth, and other things people aspire to experience.

Regardless of how hard it is to describe (let alone quantify) ... humans strive for happiness.

Likewise, it is hard to imagine a well-balanced and objective "Happiness Report" because so much of the data required to compile it seems subjective and requires self-reporting.

Nonetheless, the World Happiness Report takes an annual look at quantifiable factors (like health, wealth, GDP, and life expectancy) and more intangible factors (like social support, generosity, emotions, and perceptions of local government and businesses). Below is an infographic highlighting the World Happiness Report data for 2025.

World Happiness Report via Gallup

Click here to see a dashboard with the raw worldwide data.

I last shared this concept in 2022. At the time, we were still seeing the ramifications of COVID-19 on happiness levels. As you might expect, the pandemic caused a significant increase in negative emotions reported. Specifically, there were substantial increases in reports of worry and sadness across the ninety-five countries surveyed. The decline in mental health was higher in groups prone to disenfranchisement or other particular challenges – e.g., women, young people, and poorer people.

Ultimately, happiness scores are relatively resilient and stable, and humanity persevered in the face of economic insecurity, anxiety, and more.

While scores in North America have dropped slightly, there are positive trends.

The 2025 Report

In the 2025 report, one of the key focuses was an increase in pessimism about the benevolence of others. There seems to be a rise in distrust that doesn't match the actual statistics on acts of goodwill. For example, when researchers dropped wallets in the street, the proportion of returned wallets was far higher than people expected.

Unfortunately, our well-being depends on our perception of others' benevolence, as well as their actual benevolence.

Since we underestimate the kindness of others, our well-being can be improved by seeing acts of true benevolence. In fact, the people who benefit most from perceived benevolence are those who are the least happy.

"Benevolence" increased during COVID-19 in every region of the world. People needed more help, and others responded. Even better, that bump in benevolence has been sustained, with benevolent acts still being about 10% higher than their pre-pandemic levels.

Another thing that makes a big difference in happiness levels worldwide is a sense of community. People who eat with others are happier, and this effect holds across many other variables. People who live with others are also happier (even when it's family).

The opposite of happiness is despair, and deaths of despair (suicide and substance abuse) are falling in the majority of countries. Deaths of despair are significantly lower in countries where more people are donating, volunteering, or helping strangers.

Yet Americans are increasingly eating alone and living alone, and the U.S. is one of the few countries experiencing an increase in deaths of despair (especially among the younger population). In 2023, 19% of young adults across the world reported having no one they could count on for social support, a 39% increase compared to 2006.

Takeaways

In the U.S. and a few other regions, the decline in happiness and social trust points to the rise in political polarization and distrust of "the system". As life satisfaction falls, there is a rise in anti-system votes.

Among unhappy people attracted by the extremes of the political spectrum, low-trust people are more often found on the far right, whereas high-trust people are more inclined to vote for the far left.

Despite that, when we feel like we're part of a community, spend time with others, and perform prosocial behavior, we significantly increase perceived personal benefit and reported happiness levels.

Do you think we can return to previous levels of trust in the States? I remember when it felt like both parties understood that the other side was looking to improve the country, just with different methods.

On a broader note, while we have negative trends in the U.S., the decrease is smaller than you might expect. The relative balance demonstrated in the face of such adversity may point towards the existence of a hedonic treadmill, or a set-point of happiness.

Regardless of the circumstances, people can focus on what they choose, define what it means to them, and choose their actions.

Remember, throughout history, things have gotten better. There are dips here and there, but like the S&P 500 ... we always rally eventually.

Onwards!

Posted at 10:20 PM in Art, Books, Business, Current Affairs, Food and Drink, Gadgets, Games, Healthy Lifestyle, Ideas, Just for Fun, Market Commentary, Movies, Music, Personal Development, Religion, Science, Sports, Travel, Web/Tech