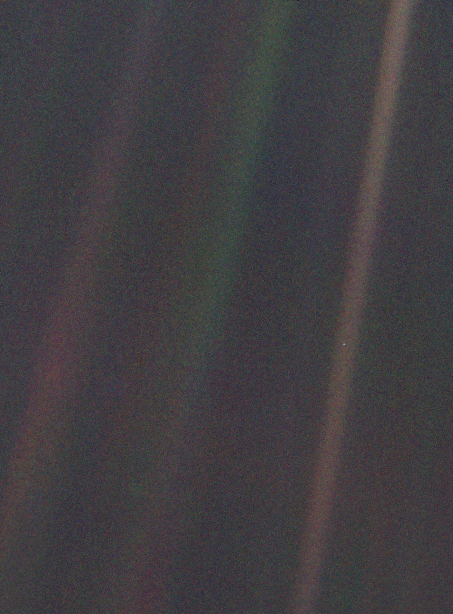

In the age of AI, we’re obsessed with better answers. But the real leverage may come from better questions.

It’s easier to solve someone else’s problem than your own. Why? Because your biases, emotions, and problem-solving frameworks become part of the problem. Your blind spots also tend to go unexamined when you’re both the observer and the subject.

As an entrepreneur, I strive to be objective about the decisions I make. Three things help: tracking key performance indicators, getting different perspectives from trusted advisors, and relying on tried-and-true decision frameworks.

Mindfulness as a Decision Framework

Combining all three creates a form of “mindfulness”: observing dispassionately from a vantage point that takes in every perspective.

That almost-indifferent, objective approach is also where exponential technologies like AI excel. They amplify intelligence by helping us make better decisions, take smarter actions, and continually improve performance.

In 2021, I shot a video about mindfulness and the future of AI. I think it has held up remarkably well.

When I shot it, AI was still relatively limited, and the technology has come a long way in just a few years. At the time, I suggested that:

The future of AI will likely be based on swarm intelligence, where many specialist components communicate, coordinate, and collaborate to view a situation more objectively, better evaluate the possibilities, and determine the best outcome in a dynamic and adaptable way that adds a layer of objectivity and nuance to decision-making.

Five years later, that prediction has largely materialized. Multi-agent frameworks, retrieval-augmented generation, and tool-using LLMs now orchestrate specialized components to tackle complex problems. The architecture isn’t identical to biological swarm intelligence, but the principle holds: better decisions emerge from coordinated, specialized perspectives, and from understanding the actual purpose of your tools.

What Hasn’t Changed

AI is a powerful solution for a seemingly infinite number of problems. But, much like the internet, it’s easy to get distracted by shiny objects, flashy intrusions, or compelling answers.

It is important to stay mindful and diligent as you apply AI and AI agents to your business.

Many of my friends are getting excited about these tools, and they’re using them for countless tasks, but they’re not necessarily doing a good job of evaluating whether they should be.

Sometimes, you shouldn’t even be looking for the right answer; you should be looking for the right question.

The Importance of Better Questions

One of the lessons I teach to our younger employees is that an answer is not THE answer. It’s intellectually lazy to think you’re done simply because you come up with a solution. There are often many ways to solve a problem, and the goal is to determine which yields the best results.

Even if you find THE answer, it is likely only THE answer temporarily. It is a step in the right direction that buys you time to learn, improve, and re-evaluate.

Mindfulness comes from slowing down, stepping back, and looking at something from multiple perspectives, and AI can be a powerful tool for that when used intentionally. It can help us explore different viewpoints, challenge assumptions, and think more broadly.

But the greatest benefit of AI may not be in generating better answers. More often, it comes from helping us ask better questions.

Used mindfully, AI becomes less of a shortcut to conclusions and more of a tool for deeper thinking.

Recently, I’ve started using AI to sharpen my questions, and it’s changing the way I approach problems. At first, that sounds abstract, but in practice it forces a very different kind of thinking. Instead of immediately searching for conclusions, you start asking what actually makes a question “better” in the first place. How do you move from a vague sense of uncertainty to a question precise enough to reveal something useful?

When I’m evaluating a project now, I rarely ask AI something broad like, “Is this a good opportunity?” Questions like that usually produce predictable answers. Instead, I use AI to pressure-test my own thinking. I’ll ask it to identify the assumptions underneath the idea, explore what would have to be true for the project to fail, or point out the questions I haven’t considered yet. The process feels less like outsourcing thought and more like refining it.
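For readers who like to see this concretely, here is a minimal sketch of turning one broad idea into the three pressure-test prompts described above. The function name `pressure_test_prompts` and the exact prompt wording are my own illustrative assumptions, and the sketch only builds text; it does not call any AI service.

```python
# Hypothetical helper: the name and the prompt wording are illustrative
# assumptions, not part of any particular tool or library.
def pressure_test_prompts(idea: str) -> list[str]:
    """Turn one broad idea into three narrower pressure-test prompts."""
    idea = idea.strip()
    return [
        f"List the assumptions underneath this idea: {idea}",
        f"What would have to be true for this to fail? Idea: {idea}",
        f"What questions have I not considered yet about: {idea}",
    ]


# Example idea is made up for illustration.
prompts = pressure_test_prompts("Launch a paid newsletter for our clients")
for prompt in prompts:
    print(prompt)
```

Each prompt can then be pasted into whichever AI tool you already use. The point is that the broad question never gets asked directly.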

That shift, from answer-seeking to question-sharpening, has changed how I handle ambiguity and make decisions. It has also changed what I consider trustworthy. I’ve started building what I think of as a “question pattern library”: prompts and frameworks that consistently help add structure to messy situations. Some questions help clarify the framing by forcing you to define the real decision being made rather than reacting to surface-level symptoms. Others establish criteria, helping determine how success should actually be measured before debating solutions. And some are designed to expose bottlenecks by identifying which assumption, if proven false, would completely change the next step.
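One way such a library might be kept is as a small lookup table grouped by purpose. The sketch below is an assumption about structure, not a description of my actual files: the category names mirror the three kinds of questions above, and the specific question wording is illustrative.

```python
# Hypothetical structure: the categories follow the three kinds of questions
# described in the text; the question wording itself is an assumption.
QUESTION_PATTERNS: dict[str, list[str]] = {
    "framing": [
        "What is the real decision being made here?",
        "Which of these are symptoms, and what is the underlying issue?",
    ],
    "criteria": [
        "How should success be measured before we debate solutions?",
    ],
    "bottlenecks": [
        "Which assumption, if proven false, would completely change the next step?",
    ],
}


def questions_for(category: str) -> list[str]:
    """Return a copy of the stored questions, or an empty list if unknown."""
    return list(QUESTION_PATTERNS.get(category, []))


for question in questions_for("framing"):
    print(question)
```

Keeping the questions grouped by what they are for makes it easier to reach for the right kind of question when a situation feels messy.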

Over time, I’ve realized these questions work best when they build on each other. At important checkpoints, I’ll often run through a simple sequence: What became clearer? What does this change? Why does it matter? What’s the next best move? The answers themselves matter less than the way the questions force clearer thinking.
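The checkpoint sequence above can be sketched as an ordered list that is always asked in the same order. The names `CHECKPOINT_QUESTIONS` and `checkpoint_prompt`, and the sample context, are hypothetical. Only the four questions come from the text.

```python
# The four questions are taken from the text; everything else here
# (names, the "Context:" header, the numbering) is an illustrative assumption.
CHECKPOINT_QUESTIONS = (
    "What became clearer?",
    "What does this change?",
    "Why does it matter?",
    "What's the next best move?",
)


def checkpoint_prompt(context: str) -> str:
    """Build one numbered prompt that walks through the questions in order."""
    lines = [f"Context: {context.strip()}", ""]
    lines += [f"{i}. {q}" for i, q in enumerate(CHECKPOINT_QUESTIONS, start=1)]
    return "\n".join(lines)


print(checkpoint_prompt("We finished the pilot with three customers."))
```

A tuple is used here because the order matters: each question builds on the answer to the one before it.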

The more I use AI this way, the more I think its greatest value may not be generating better answers at all. Used mindfully, its real strength is helping us examine our own thinking more carefully. Better questions create better distinctions, and better distinctions usually lead to better judgment. So before asking AI for an answer this week, it may be worth asking it to help you frame a better question first. You might discover that the most valuable part of the interaction isn’t the response, but the thinking process that led to it.