Everywhere you look, someone is predicting which jobs AI will eliminate or automate away next. For many people, the real question is more personal: Is my job safe — or will my company survive?

To answer that, it helps to zoom out.

Back in 2018, I asked a simple question: Which industries were most at risk of disruption? This was pre‑AI boom, so the focus was on digitization and automation (rather than large language models or copilots). That article identified the key signals that an industry was ripe for disruption. That simple framework still applies today.

Here’s a brief summary of the findings.

- Digitization Level – Industries like agriculture, construction, hospitality, healthcare, and government were among the least digitized, yet they still accounted for 34% of GDP and 42% of employees.

- Regulation Intensity – In heavily regulated industries, companies that find ways to work around legacy rules can become effective competitors quickly (e.g., Lyft or Tesla).

- Number of Competitors – Crowded markets with excess capacity or wasted resources (like taxis waiting for fares or empty airplane seats) are vulnerable to new business models.

- Automatability – Even in 2018, many industries and tasks were ready to be automated but hadn’t been due to the cost or labor of switching to new technologies.

Ultimately, disruption was about relieving a customer’s headache while lowering costs for the producer, the customer, or both.

Today, AI’s advance is unmistakable: it is taking over more tasks and producing more of the content we create.

In 2024, the WEF evaluated which jobs were most prone to small or significant alteration by AI. IT and finance have the highest share of tasks expected to be ‘largely’ impacted by AI, which is not particularly surprising. They are followed by customer sales, operations, HR, marketing, legal, and (lastly) supply chain.

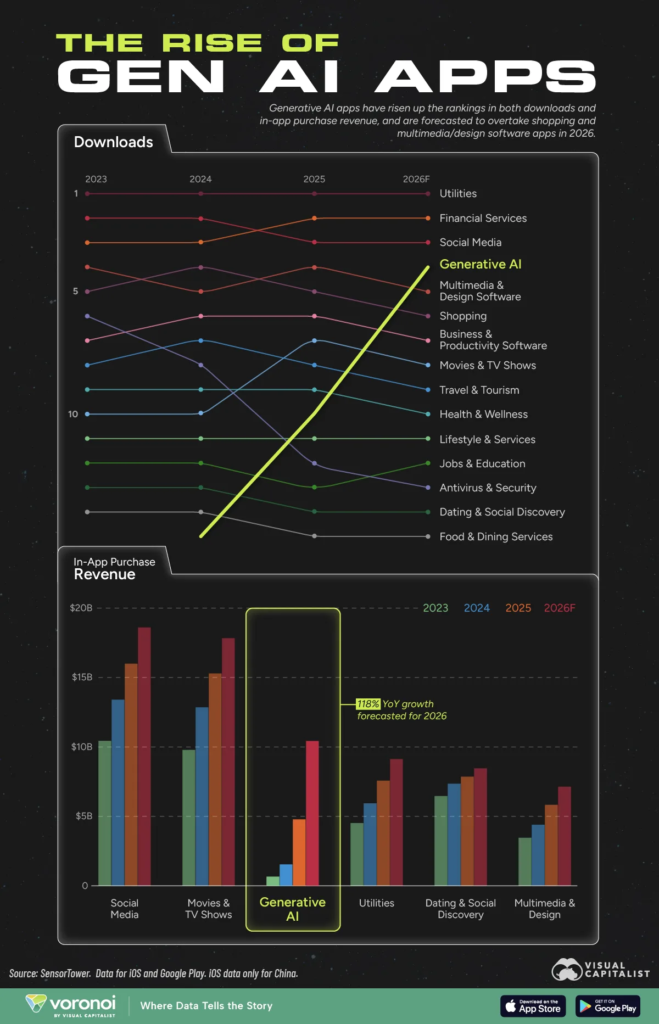

Now, new Microsoft data takes a more granular look at which specific jobs are most exposed to generative AI.

via Visual Capitalist

Microsoft assessed AI exposure using three indicators derived from Copilot usage:

- Coverage: How often tasks associated with a job appear in Copilot conversations

- Completion: How often Copilot successfully completes those tasks

- Overall AI Applicability Score: A combined metric indicating how well AI can support or execute tasks within a specific role.
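To make those three indicators concrete, here is a minimal Python sketch of how a combined score could be built from the other two. This is not Microsoft's formula, which isn't spelled out here. The `RoleUsage` fields, the definition of coverage as a share of tasks, the choice to multiply coverage by completion, and the placeholder roles and counts are all assumptions made purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class RoleUsage:
    """Hypothetical per-role counts. Not Microsoft's data or schema."""
    role: str
    tasks_total: int  # tasks associated with the role
    tasks_seen: int   # of those, tasks that show up in assistant conversations
    attempts: int     # conversations that attempted those tasks
    completed: int    # attempts judged to be completed successfully


def coverage(r: RoleUsage) -> float:
    # ASSUMPTION: coverage = share of the role's tasks that appear at all.
    return r.tasks_seen / r.tasks_total if r.tasks_total else 0.0


def completion(r: RoleUsage) -> float:
    # ASSUMPTION: completion = share of attempts that succeeded.
    return r.completed / r.attempts if r.attempts else 0.0


def applicability(r: RoleUsage) -> float:
    # ASSUMPTION: combine the two with a simple product, so a role needs
    # both high coverage and high completion to score highly.
    return coverage(r) * completion(r)


# Placeholder roles and made-up counts, for illustration only.
roles = [
    RoleUsage("role_a", tasks_total=10, tasks_seen=8, attempts=100, completed=75),
    RoleUsage("role_b", tasks_total=10, tasks_seen=2, attempts=40, completed=10),
]

# Rank roles from most to least exposed under this toy scoring.
ranked = sorted(roles, key=applicability, reverse=True)
for r in ranked:
    print(f"{r.role}: coverage={coverage(r):.2f} "
          f"completion={completion(r):.2f} score={applicability(r):.2f}")
```

The design choice worth noticing is the product: under this assumption, a role whose tasks come up constantly but are rarely completed well would not rank as highly exposed, and neither would a role where AI excels at a narrow slice of the work.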

Language-heavy and research-based roles are at the highest risk of disruption. Think interpreters, historians, writers, and customer service representatives.

But exposure does not automatically mean replacement. Augmenting roles with AI will become increasingly common.

Even though creative and communication roles sit near the top, more technical roles will also feel a meaningful impact.

Fear not … there is still a place for humans. In many cases, AI functions as a complement rather than a substitute, because these jobs still require judgment, creativity, and human interaction.

Are you using AI in your daily process yet?

At Capitalogix, we focus on amplifying intelligence. To us, that means the ability to make better decisions, take smarter actions, and continuously improve performance. In many ways, it comes down to better real-time decision-making. Practically, that means using technology to calculate, find, or know easy things faster … rather than predicting harder things better.

You don’t have to predict every change. You do have to build the habit of experimenting with AI in the work you already do. The gap between winners and losers will be about learning speed, not job title.

In the next few years, the biggest divide will not be between ‘AI jobs’ and ‘non‑AI jobs.’ It will be between people who learn to wield AI and people who pretend it is not their problem.

A few years from now, when I write a follow‑up to this article, I suspect we will look back and clearly see the gap between winners and losers. It might come down to something as simple as this question:

What are you doing to make sure that you ride the wave, rather than getting crushed by it?