For decades, I’ve been an Early Adopter of technologies. I love exploring tools to get an idea of where things are going and what’s possible.

In part, that means I don’t wait for things to settle down and a clear winner to arrive. Instead, I tend to try several tools that claim to do something that excites me.

My wife questions whether this is a waste of time, energy, and money. But the practical realities of technology businesses make it a workable strategy for me in my role.

Companies have different levels of access to talent, opportunities, and resources. Consequently, the first tool that does something cool isn’t necessarily the one that takes off or gets big. Sometimes the winner is the one that keeps playing the game, even slowly, committed to getting better until it wins. This is especially true in highly contested areas like large language models.

A Look At My AI Usage …

Like many of you, I use many AI tools every day. I pay for ChatGPT, Claude, Perplexity, and Microsoft Copilot. I also pay for limited subscription access to Google Gemini and Elon Musk’s Grok (and for a host of other useful special-purpose tools like Grammarly, Granola, and Wispr Flow).

For a while, ChatGPT has been my default. Projects tend to start there and end there. It’s been my source of comfort.

Even though I start in ChatGPT, I might then show the result to Perplexity and say, “Hey, here’s something I built in ChatGPT. What do you think and what would you change?” This process often results in a new idea or a different perspective. I tend to bring those ideas or perspectives back to ChatGPT, saying, “Hey, Perplexity recommended this … What do you think?”

As you might guess, I’ve tried various iterations of that game. For example, I might start something in Perplexity or Google Gemini … but over time (at least for the type of work that I do), ChatGPT earned its place as my default.

Now, in part, I’m writing this post because Claude has started taking more and more of my cycles: the answers it gives, the user interface, the integrations. It’s really interesting to see how fast it’s improving. OpenAI just released a new interim version of ChatGPT, seemingly to counter the momentum Claude is gaining from so much favorable press.

There’s another reason that I know Claude is getting better. It’s still critical of things I produce in other models, but other models are increasingly impressed with what I produce in Claude.

That’s notable because AI systems typically prefer their own outputs. The fact that one model regularly elevates another suggests something else is happening.

Meanwhile, the gap at the top is narrowing. And it’s changing quickly in part because people share outputs from one model with another. This process is a form of cross-pollination that allows LLMs to see (and learn from) a wider range of perspectives and techniques.

So, objectively, which models are really the best? That’s where things get murky. Benchmarks try to answer the question, but they only capture slices of capability.

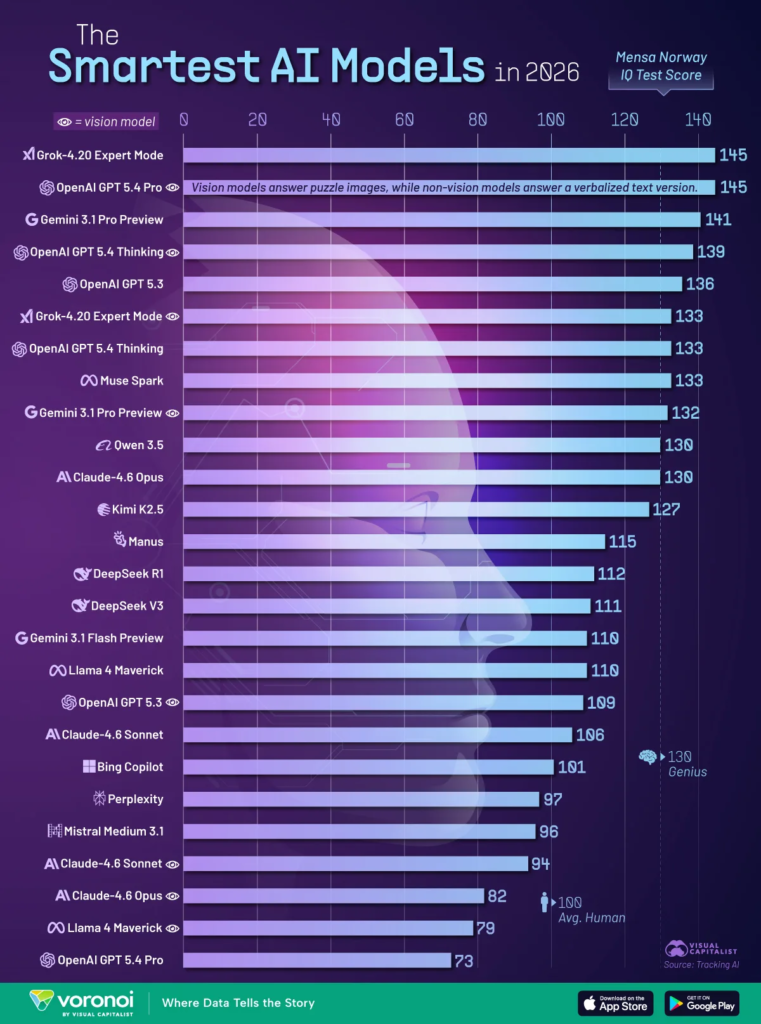

The Smartest AI Models of 2026 … Well, April 2026

via visualcapitalist

Lists like this are less a “stamp of approval” and more like a snapshot in time. Models aren’t just getting better every day; new models with radically different capabilities are being created and released on ever-shorter cycles, too.

In the list above, Grok-4.20 Expert Mode and OpenAI GPT 5.4 Pro (Vision) tie for the top spot (based on TrackingAI’s April 2026 Mensa Norway benchmark), each scoring 145. The top tier is becoming more crowded, with leading models separated by just a few points. Scores have increased significantly since 2025, demonstrating the rapid progress in frontier AI reasoning on visual pattern-recognition tests. But even that doesn’t account for the fact that ChatGPT’s version 5.5 was released this week.

While this is only one test of AI capabilities, it’s very interesting to see how close the best models have gotten. It’s also worth noting that in 2025, the highest score was 135.

Meanwhile, use of these tools is skyrocketing.

Using cutting-edge AI isn’t a differentiator anymore — it’s the price of admission. The real question isn’t who has the best AI; it’s who can afford to keep up with the pace of change.

Which raises a more important question than “Who’s winning?”:

Can AI Firms Afford to Keep Up?

Last week, I talked about my eldest son lightly teasing me for still trying to overly direct Claude in performing tasks. That isn’t just a sign of me getting older … it reflects a broader, faster shift.

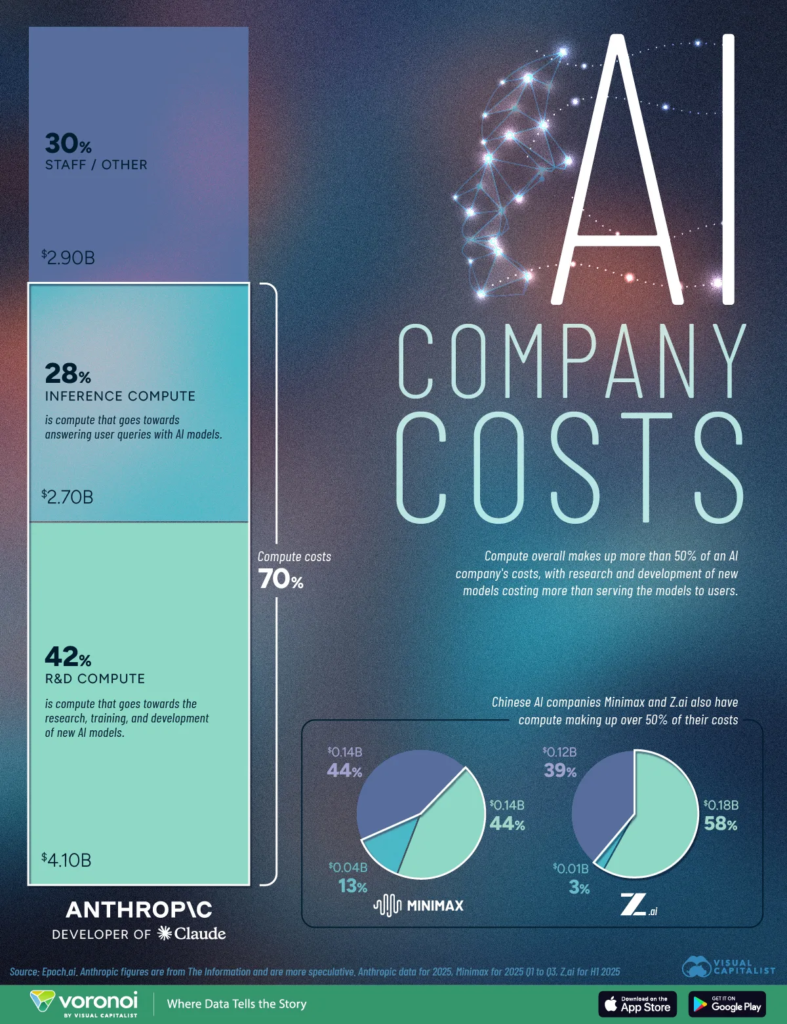

via visualcapitalist

Early AI development was talent-driven. The limiting factor was human capital — researchers, engineers, and domain experts pushing systems forward.

That constraint is shifting. Today, leading AI firms are increasingly defined by access to compute. Training, fine-tuning, and running these models at scale require massive infrastructure investments, often dwarfing even the highest salaries in tech.

Anthropic spent almost $7 billion on compute in 2025.

Talent still matters, but it’s no longer the primary bottleneck.

Can You Afford To Keep Up?

As companies start leaning more heavily on tools like ChatGPT and Claude, the economics get a little less straightforward.

At first, AI feels like a no-brainer. You’re getting more done, faster: emails, summaries, code, all of it. And the cost? It barely registers. A few cents here and there, easy to ignore. But then usage creeps up. And with automation and agents, what was occasional becomes constant. It gets baked into workflows, products, and day-to-day habits.

And since everything runs on tokens, the meter is always running in the background.

Suddenly, AI stops feeling like “free leverage” and starts acting more like a quiet, always-on teammate. A fantastic and efficient teammate … but one that happens to bill you for every task, and more when you ask it to show its work. At that point, it’s not surprising that the costs can stack up to something meaningful. In some cases, AI can now cost more than human workers.
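To make the running meter concrete, here is a minimal back-of-the-envelope sketch in Python. Every number in it is a made-up placeholder: the per-million-token prices, the call volumes, and the token counts are not any vendor's actual rates or anyone's real usage. It also assumes that "show your work" reasoning tokens are billed at the output rate, which may not hold for every provider.

```python
from dataclasses import dataclass


@dataclass
class Usage:
    """Hypothetical usage profile; all values are illustrative."""
    calls_per_day: int
    input_tokens: int          # prompt tokens per call
    output_tokens: int         # visible answer tokens per call
    reasoning_tokens: int = 0  # extra "show your work" tokens (assumed billed as output)


def monthly_cost(usage: Usage, input_price_per_m: float,
                 output_price_per_m: float, days: int = 30) -> float:
    """Estimate monthly spend in dollars from per-million-token prices."""
    per_call = (
        usage.input_tokens * input_price_per_m
        + (usage.output_tokens + usage.reasoning_tokens) * output_price_per_m
    ) / 1_000_000
    return per_call * usage.calls_per_day * days


# Placeholder prices in dollars per million tokens (not real vendor rates).
INPUT_PRICE, OUTPUT_PRICE = 3.0, 15.0

# One person asking occasional questions.
occasional = Usage(calls_per_day=20, input_tokens=1_000, output_tokens=500)

# An always-on agent workflow that also pays for reasoning tokens.
agent = Usage(calls_per_day=5_000, input_tokens=4_000,
              output_tokens=1_000, reasoning_tokens=3_000)

print(f"occasional: ${monthly_cost(occasional, INPUT_PRICE, OUTPUT_PRICE):,.2f}/month")
print(f"agent:      ${monthly_cost(agent, INPUT_PRICE, OUTPUT_PRICE):,.2f}/month")
```

With these placeholder inputs, the occasional user lands at a few dollars a month while the agent workflow lands in the thousands. The absolute figures mean nothing; the point is that the same per-token prices produce very different bills once volume and reasoning tokens multiply together.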

That’s not a knock on AI — it’s just the reality of using industrial-grade AI at scale.

It’s easy to think of AI as a pure efficiency gain, something that just improves margins. But in practice, it’s both sides of the equation. It drives output, and it adds cost. The companies building these tools have always known that. Now the companies using them are starting to see it too.

I’m fully committed to AI, and I keep exploring further. But the deeper you delve, the more important it becomes to pause and catch your breath.

Activity isn’t progress if it doesn’t move you in the right direction.

Onwards!
