Artemis II was a nine-day lunar flyby mission with a crew of four astronauts, launched on April 1, 2026. It was the first crewed NASA-led Artemis flight and the first human journey beyond low Earth orbit since Apollo 17 in 1972.

During their lunar flyby, the crew achieved the record for the farthest distance from Earth by humans, reaching 252,756 miles (406,771 km), surpassing Apollo 13’s previous record of 248,655 miles (400,171 km).

On Friday, they splashed down safely in the Pacific Ocean.

“Victor, Christina and Jeremy, we are, we are bonded forever, and no one down here is ever going to know what the four of us just went through … And it was the most special thing that will ever happen in my life.” – Reid Wiseman

This is the kind of story that’s easy to file under ‘space news’ – but for entrepreneurs, investors, and leaders, it’s also a case study in how fast the frontier moves when compounding technology meets long‑term conviction.

As we move forward, we’ll talk more about the emerging business landscape around space (from connectivity and Earth‑observation data to in‑orbit manufacturing, commercial stations, logistics, and even space‑based energy). But today’s piece is really about something more fundamental: marking a milestone on the path and widening our sense of where we are and what’s possible.

From Humble Beginnings …

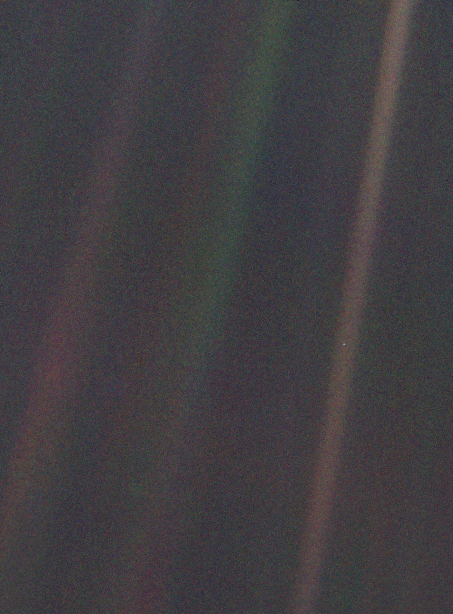

To appreciate how far we’ve come, it helps to look back at the early days of space travel. Voyager 1 launched in 1977. Just over a dozen years later, in 1990, it was approximately 6 billion kilometers from Earth, making it one of the most distant human-made objects ever launched. At that point, at Carl Sagan’s urging, Voyager 1 was commanded to turn around and take one last photo of the Earth… a pale blue dot.

The resulting photo is impressive precisely because it shows so little in so much.

“Every saint and sinner in the history of our species lived there – on a mote of dust suspended in a sunbeam.” – Carl Sagan

Earth is in the far-right sunbeam – a little below halfway down the image. This image (and the ability to send it back to Earth) was the culmination of years of effort, technological advancement, and the dreams of mankind.

Carl Sagan’s Pale Blue Dot speech is still profound and moving. Invest three minutes to watch and listen.

Carl Sagan via YouTube

Here’s the transcript:

Look again at that dot. That’s here. That’s home. That’s us.

On it everyone you love, everyone you know, everyone you ever heard of, every human being who ever was, lived out their lives.

The aggregate of our joy and suffering, thousands of confident religions, ideologies, and economic doctrines, every hunter and forager, every hero and coward, every creator and destroyer of civilization, every king and peasant, every young couple in love, every mother and father, hopeful child, inventor and explorer, every teacher of morals, every corrupt politician, every “superstar,” every “supreme leader,” every saint and sinner in the history of our species lived there–on a mote of dust suspended in a sunbeam.

The Earth is a very small stage in a vast cosmic arena.

Think of the rivers of blood spilled by all those generals and emperors so that, in glory and triumph, they could become the momentary masters of a fraction of a dot. Think of the endless cruelties visited by the inhabitants of one corner of this pixel on the scarcely distinguishable inhabitants of some other corner, how frequent their misunderstandings, how eager they are to kill one another, how fervent their hatreds.

Our posturings, our imagined self-importance, the delusion that we have some privileged position in the Universe, are challenged by this point of pale light. Our planet is a lonely speck in the great enveloping cosmic dark. In our obscurity, in all this vastness, there is no hint that help will come from elsewhere to save us from ourselves.

The Earth is the only world known so far to harbor life.

There is nowhere else, at least in the near future, to which our species could migrate. Visit, yes. Settle, not yet. Like it or not, for the moment the Earth is where we make our stand.

It has been said that astronomy is a humbling and character-building experience. There is perhaps no better demonstration of the folly of human conceits than this distant image of our tiny world. To me, it underscores our responsibility to deal more kindly with one another, and to preserve and cherish the pale blue dot, the only home we’ve ever known.

How powerful a statement from a grainy pixel.

… To New Heights

Today, people live in space and post videos from the ISS, and we have high-resolution images of galaxies near and far. Artemis II shows that we’re going back to the moon, and that it’s only the beginning. We also recently talked about the other new goals and explorations already on the proverbial docket.

We take for granted the scale of the technological phase shift. The smartphone in your pocket has more computing power than the systems that first took us to the moon – and it has for decades.

As humans, we’re wired to think locally and linearly. We evolved to live in small groups, to fear outsiders, and to stay in one region until we die. We’re not wired to think about the billions and billions of individuals on our planet, the rate of technological growth, or how small all of that is compared to the vastness of space.

However, today’s reality necessitates that we think about the world, our impact, and what’s now possible for us.

We’ve created better, faster ways to travel, instantaneous communication networks across vast distances, and megacities. Our tribes have gotten much bigger – and with that, our ability to enact massive change has grown as well.

Space was the proving ground for many of today’s breakthrough technologies. Now, similar waves are building in AI, medicine, genetic engineering, robotics, and even ‘world‑building’ – not just in virtual environments, but in how we design cities, companies, and economies. As leaders, our job is to spot these trajectories early, place disciplined bets, and build systems that can adapt as the frontier moves.

It’s hard to comprehend the scale of the universe and the scale of our potential – but that’s exactly why it’s worth exploring. The view of the ‘pale blue dot’ reminds us that most of what feels urgent today won’t matter in a decade, but the systems we build and the bets we make will. This week, ask yourself: where are you still thinking locally and linearly in a world that rewards global, exponential thinking?

Onwards!